Interactive Swarm Space


Who uses ISOSynth

ISOSynth is intended for composers and audio experimentalists. The mention of C++ above might lead you to think: Hey, I am not a programmer! So how am I supposed to make use of this thing? Well, the good news is: you don't need a higher education in computer science to use ISOSynth, because this documentation will attempt to lead you through all the steps necessary to implement your own ideas and concepts. For each technical detail, there will be some well-explained example code, which you can use and alter any way you like. However, for those who would like to dig deeper and write their own ISOSynth units, there are two important links: an online introduction to C++ (Bruce Eckel's "Thinking in C++") and a comprehensive reference documentation of ISOSynth, generated automatically using Doxygen.

How to get started

In order to get your own coding avalanche started, we have put together a downloadable package that includes all the necessary tools, as well as an example project for XCode. All you have to do is follow the instructions under "Developer Installation Procedure" on the installation page. Inside the ISO XCode project folder (.../iso_apps/iso_synth_app/trunk/project) you will find the default project file (iso_synth_app.xcodeproj). Make sure you have the newest XCode version and open this xcodeproj file.

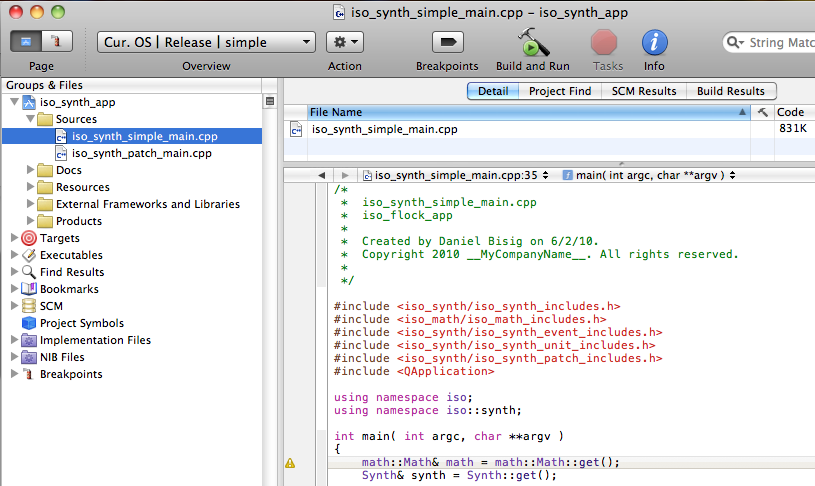

Hello World

Our first example is the audio synthesis equivalent of a Hello World program: it simply plays a sine wave for a couple of seconds. You will find it in the source code file "iso_synth_simple_main.cpp" inside our example XCode project (if necessary, go back to the installation instructions).

Let's walk through the code step by step. It starts with a series of #include statements that tell the compiler which other classes to include when compiling (some of these will in turn include further classes, and so on). Next, the two namespace statements guard against the occurrence of the same name in multiple classes, a situation that would confuse your compiler into oblivion. If each name is part of a namespace, the two names won't be confused. By stating "using namespace ...." we don't have to mention the namespaces explicitly in our code. Otherwise we would have to write iso::synth::WaveTableOscil instead of simply WaveTableOscil.

Next up is the main() method, the starting point of any application in many programming languages. At first glance, this main() method does two things: it creates a new Synth object and then tries to build and connect the audio synthesis units. If it fails in doing so, it will throw an exception, which is caught by one of the two catch clauses. This is basically a programmer's way of saying: the code complains that something went wrong, and the complaint is handled by one of two specific "complaint departments" (there could be more of them if more exception classes could be thrown in this context). If an exception is not caught, the program simply crashes!

However, what's really important for us is the content of the try { ... } block:

QApplication a(argc,argv); initializes a new QT application. We use QT for a lot of the window functionality, which is actually more important for ISOFlock than for ISOSynth.

WaveTableOscil* oscil = new WaveTableOscil("oscil", "sinewave"); creates an object called oscil and initializes it with two parameters: "oscil", the internal name of the object, and "sinewave", the name of the wavetable being used. This wavetable called "sinewave" contains the shape of a sine wave over one cycle.
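To make the idea of a single-cycle wavetable concrete, here is a minimal sketch in plain C++. It does not use ISOSynth; the helper names makeSineTable and readTable, the table size and the lack of interpolation are assumptions chosen for illustration, and the library's own wavetable handling may differ.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper (not ISOSynth API): stores one full sine cycle in a table.
std::vector<float> makeSineTable(std::size_t size) {
    assert(size > 0);
    const double twoPi = 6.283185307179586;
    std::vector<float> table(size);
    for (std::size_t i = 0; i < size; ++i) {
        const double phase = static_cast<double>(i) / static_cast<double>(size);
        table[i] = static_cast<float>(std::sin(twoPi * phase));
    }
    return table;
}

// Hypothetical helper: looks up the sample for a phase in [0, 1), without interpolation.
float readTable(const std::vector<float>& table, double phase) {
    assert(!table.empty() && phase >= 0.0 && phase < 1.0);
    const std::size_t index =
        static_cast<std::size_t>(phase * static_cast<double>(table.size()));
    // Guard against rounding pushing the index one past the end.
    return table[std::min(index, table.size() - 1)];
}
```

A phase value that advances by frequency / sampleRate for every sample and wraps around at 1 would turn these lookups into a continuous tone.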
It's uncommon to generate sine wave audio by calculating the trigonometric sine function in real time, because that would take up way too many computing resources. It's much faster to calculate the shape once and then store it in a table.

Also notice the little "*" after the object type. Without going too much into the details of pointers, let's just say this much: putting a * after the type tells the compiler that "oscil" points to the memory address of this WaveTableOscil. You may later pass this pointer to other classes, which is much easier and faster than copying the object itself and worrying about multiple copies of your object in your program. Admittedly, it's not quite that simple, but it will serve us as a starting point.

OutputUnit* outputUnit = new JackOutputUnit("audioout", 1, "system"); creates an output unit using Jack (which hopefully you have installed by now according to the instructions in the Installation section). In our example, we use just 1 channel for output.

Let's now connect these two units, which is easily achieved by the following statement: oscil->connect(outputUnit); Please note that the object names, not the internal names, are used for this purpose. We are accessing the connect() method that belongs to the WaveTableOscil object and passing the pointer to the outputUnit as its argument. Since "oscil" is itself a pointer to a WaveTableOscil object, the method must be accessed using "->" instead of just a "." (e.g. myNormalObject.someMethod() becomes myNormalObjectPOINTER->someMethod()).

Then it's time to wake up the synth we initialized before the try { } clause, let it run for 10 seconds (10000 milliseconds), and then stop it again. This is done by calling synth.start(), letting the program sleep for 10 seconds, and finishing with synth.stop(). After the synth has stopped, there are two clean-up statements, which we won't elaborate upon.
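The structure of the walkthrough (namespace, pointers, connect(), try and catch) can be sketched with stand-in classes that compile without ISOSynth. Everything in the sketch namespace is hypothetical: the classes only borrow names from the text, their interfaces are assumptions, and the Synth object, the Jack output and the 10-second sleep are left out.

```cpp
#include <cassert>
#include <cstddef>
#include <iostream>
#include <stdexcept>
#include <string>
#include <vector>

// Stand-in classes so the sketch compiles without ISOSynth. They borrow
// names from the text, but their interfaces are assumptions.
namespace sketch {

class Unit {
public:
    explicit Unit(const std::string& name) : mName(name) {}
    virtual ~Unit() {}

    // Stores the downstream unit; a null target is reported via an exception.
    void connect(Unit* target) {
        if (target == nullptr) {
            throw std::invalid_argument("cannot connect to a null unit");
        }
        mTargets.push_back(target);
    }

    const std::string& name() const { return mName; }
    std::size_t targetCount() const { return mTargets.size(); }

private:
    std::string mName;
    std::vector<Unit*> mTargets;
};

class WaveTableOscil : public Unit {
public:
    WaveTableOscil(const std::string& name, const std::string& tableName)
        : Unit(name), mTableName(tableName) {}
    const std::string& tableName() const { return mTableName; }

private:
    std::string mTableName;
};

} // namespace sketch

using namespace sketch; // lets us write WaveTableOscil instead of sketch::WaveTableOscil

// Builds and connects two units the way the walkthrough describes.
// Returns true if the connection was made, false if an exception was caught.
bool buildHelloWorld() {
    WaveTableOscil* oscil = nullptr;
    Unit* outputUnit = nullptr;
    bool connected = false;
    try {
        oscil = new WaveTableOscil("oscil", "sinewave");
        assert(oscil->name() == "oscil");      // internal name, not the variable name
        outputUnit = new Unit("audioout");
        oscil->connect(outputUnit);            // oscil is a pointer, so use "->"
        connected = (oscil->targetCount() == 1);
    } catch (const std::invalid_argument& e) { // first "complaint department"
        std::cerr << "invalid argument: " << e.what() << '\n';
    } catch (const std::exception& e) {        // any other standard exception
        std::cerr << "error: " << e.what() << '\n';
    }
    delete oscil;       // clean-up; deleting a null pointer is harmless
    delete outputUnit;
    return connected;
}
```

A unit created without new, such as sketch::Unit plain("plain");, is accessed with a dot (plain.name()), whereas the pointer oscil needs the arrow (oscil->name()).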
ISOSynth Units

Almost all complex systems benefit from being broken down into smaller units, each of which focuses on a specific sub-task. However, that's not the only reason units are used as the fundamental building blocks of ISOSynth. One of the main reasons is that almost all music synthesis programming languages are based on units, and if we look at hardware synthesizers, so are they. The ISOSynth library consists of a huge set of built-in units and continues to grow as we progress. All units function in a similar fashion, because they all inherit their core functionality from the same base class. A unit consists of inputs and/or outputs, which results in three fundamental types:

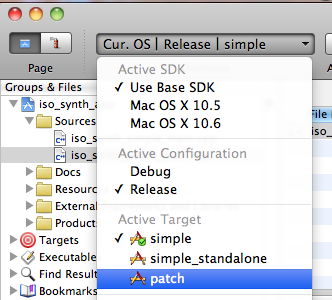

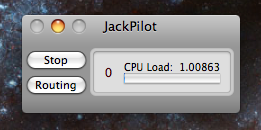

My First Patch

Now that we have seen the fundamental steps of creating, connecting and listening to the output of ISOSynth units, it's time to get a little into the mechanics of an actual synth patch. Building a patch instead of simply putting your code in a main() method has several advantages. First of all, patches can easily be reused later on: you can nest a patch you have created within another patch, switch it on and off remotely, or create several instances of it, each with different patch parameters. It also makes creating your own ISOSynth objects much more manageable, since you don't always have to edit the contents of your main() file. Instead, your patch will be an encapsulated object that you can keep for later use.

Inside the sources folder of your iso_synth_app example XCode project, you will find a file called "iso_synth_patch_main.cpp" next to iso_synth_simple_main.cpp (which we looked at as part of our Hello World example). This iso_synth_patch_main.cpp contains not only a simple (almost helloworldish) patch example but also a main() method, which, as we just learned, we need as a starting point. The compiler has to be told which source code file contains the main() method it should use as the starting point. This is done by creating a new target or, in our example, by selecting the active target "patch" from the Overview drop-down menu. If you hit the Build and Run button, this active target will be compiled and executed.

Chances are you will hear an audio result immediately. However, we do advise you to start JackPilot, which should be located in your Applications folder if you've followed our instructions. If JackPilot is running, audio playback from ISOSynth will be much more stable!

Why bother to do a patch

There are several good reasons why you should make it a habit to build patches instead of just writing everything in the ISOSynth main() method.

How to Avoid Distortion

In order to maximize your patch's sound quality, please make sure your output amplitudes don't exceed the +1/-1 range. This doesn't mean you have to watch every output of every object in your patch. Some objects need a large output amplitude range, for example a modulator object for an FM carrier oscillator. The numerical limits are at +/- (2 ^ 24), which is about 16.8 million. Distortion is only introduced if Jack (the sound output object) receives a signal outside the +1/-1 range. If necessary, you can scale down the output of the last object before the output unit by using its "outputGain" ControlPort or by introducing a dedicated MultiplyUnit, which will do the same job.

probablyClipped->connect(outputUnit);

probablyClipped->set("outputGain", 0.8);

**or:**

MultiplyUnit* volumeControl = new MultiplyUnit();

probablyClipped->connect(volumeControl);

volumeControl->connect(outputUnit);

volumeControl->set("operand", 0.8);
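What such a gain stage does to the samples can be sketched in plain C++ without ISOSynth. The helpers peakAmplitude, applyGain and gainForCeiling are hypothetical names introduced for this illustration, and samples are assumed to be 32-bit floats.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical helper: largest absolute sample value in a buffer.
float peakAmplitude(const std::vector<float>& buffer) {
    float peak = 0.0f;
    for (float s : buffer) {
        peak = std::max(peak, std::fabs(s));
    }
    return peak;
}

// Hypothetical helper: multiplies every sample by a constant factor,
// which is what an output gain or a multiply stage amounts to.
void applyGain(std::vector<float>& buffer, float gain) {
    for (float& s : buffer) {
        s *= gain;
    }
}

// Hypothetical helper: gain that brings the peak down to the given ceiling,
// or 1.0 if the buffer already stays within it.
float gainForCeiling(const std::vector<float>& buffer, float ceiling) {
    assert(ceiling > 0.0f);
    const float peak = peakAmplitude(buffer);
    return (peak > ceiling) ? ceiling / peak : 1.0f;
}
```

For a buffer whose peak is 1.25, gainForCeiling(buffer, 1.0f) gives roughly 0.8, the factor used in the statements above.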

This notion of having to be concerned with clipping only at the last unit of your patch has its advantages when dealing with a huge number of units. If you can avoid it, don't normalize the amplitude of each individual unit, but set the "outputGain" ControlPort of the last unit accordingly. You will notice that even drastic normalization factors like 0.05 produce a decent result without resolution problems.

OutputUnit* outputUnit = new JackOutputUnit(1, "Aggregate");

ProcessUnit* sum = new ProcessUnit(1);

const int n = 300; //number of oscillators

SawtoothUnit** oscs = new SawtoothUnit*[n];

for (int i = 0; i < n; ++i) {

oscs[i] = new SawtoothUnit("sinewave",1);

oscs[i]->set("frequency", 100.0 + static_cast<sample>(i*2)); //linear increasing freq

oscs[i]->connect(sum); //will eventually exceed amp of 1.0 by far (clipping).

}

sum->set("outputGain", 0.05); //normalization

sum->connect(outputUnit);

There is, of course, one catch when using amplitudes that require normalization at the end of the patch: while the very nature of a floating point number makes sure the relative resolution stays more or less constant, you cannot meaningfully add a very big number like 10^12 to a very small one like 10^-10. Well, you can, but the small number will be lost, because compared to the big one it is utterly insignificant, mathematically speaking...
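This loss of small values can be checked directly in plain C++. The helper names isAbsorbed and isAbsorbedFloat are hypothetical. The float variant assumes samples are 32-bit floats, which would fit the +/- (2 ^ 24) limit mentioned earlier, although the source does not state the sample type.

```cpp
#include <cassert>

// Hypothetical helper: true if adding small to big leaves big unchanged
// in double precision.
bool isAbsorbed(double big, double small) {
    assert(big >= 0.0 && small >= 0.0);
    volatile double sum = big + small; // volatile forces storage as a double
    return sum == big;
}

// Hypothetical helper: the same check in single precision.
bool isAbsorbedFloat(float big, float small) {
    assert(big >= 0.0f && small >= 0.0f);
    volatile float sum = big + small;  // volatile forces storage as a float
    return sum == big;
}
```

With these helpers, isAbsorbed(1e12, 1e-10) is true, while isAbsorbed(1.0, 1e-10) is false. In single precision, isAbsorbedFloat(16777216.0f, 1.0f) is true, because above 2^24 a 32-bit float can no longer represent every integer.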

Last updated: May 15, 2024